Vibe Coding Is Killing Open Source, and the Data Proves It

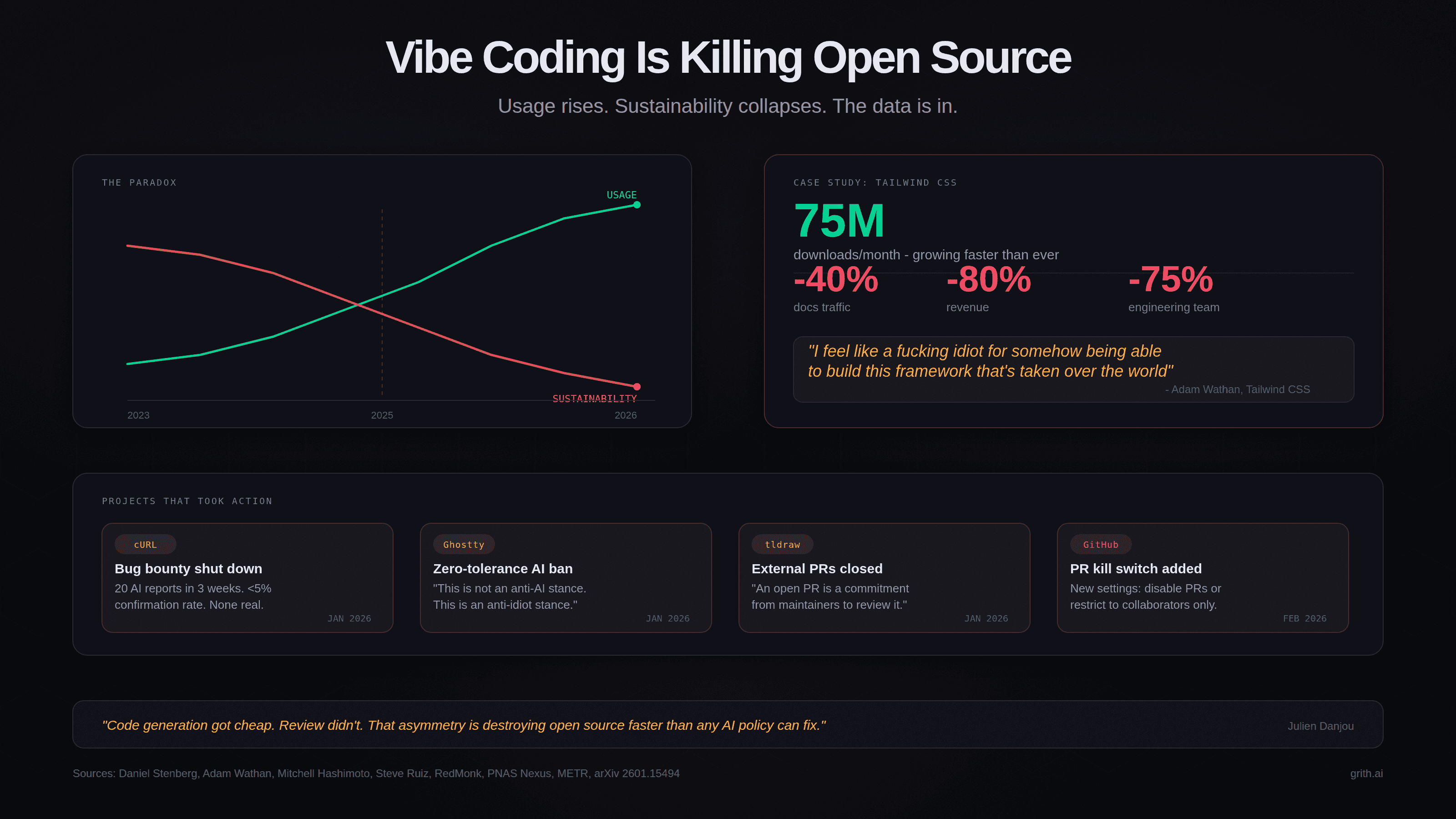

Daniel Stenberg shut down the cURL bug bounty on January 31, 2026. The program had run since 2019, found 87 real vulnerabilities, and paid out over $100,000 in rewards. In its final three weeks, cURL received 20 AI-generated reports. Seven arrived in a single 16-hour period. None described actual vulnerabilities [1].

Stenberg's reasoning was straightforward: "remove the incentive for people to submit crap and non-well researched reports to us. AI generated or not." The confirmation rate for incoming reports had dropped from above 15% to below 5%. Not even one in twenty was real [1].
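The "one in twenty" figure follows directly from the confirmation rate. Here is a minimal sketch of that arithmetic, assuming the rate applies uniformly to incoming reports. The function name is illustrative, and the 15% and 5% inputs are the thresholds quoted above, not exact measurements.

```python
def reports_per_confirmed(confirmation_rate_percent: float) -> float:
    """Average number of reports triaged for each confirmed vulnerability."""
    if not 0 < confirmation_rate_percent <= 100:
        raise ValueError("confirmation rate must be in (0, 100]")
    return 100 / confirmation_rate_percent


# Thresholds quoted in the text: "above 15%" before, "below 5%" after.
for rate in (15, 5):
    ratio = reports_per_confirmed(rate)
    print(f"{rate}% confirmed -> about {ratio:.1f} reports per real finding")
```

At those thresholds the triage load per real finding roughly triples, from about 7 reports to 20. Because the actual rates were above 15% and below 5%, the real gap was wider than this.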

cURL is not an isolated case. It is the most visible data point in a pattern that is now affecting open-source projects at every scale.

The maintainer response

Three high-profile projects took defensive action in January and February 2026. Each chose a different mechanism. All cited the same cause.

Ghostty adopted a zero-tolerance policy for unsolicited AI-generated contributions [2]. Mitchell Hashimoto, the creator, was blunt: "This is not an anti-AI stance. This is an anti-idiot stance." Ghostty's maintainers use AI tools daily. The problem is not AI-assisted development - it is drive-by contributions from people who cannot explain the code they are submitting. Hashimoto later launched Vouch, a trust-based system where contributors must be vouched for before submitting code. Unvouched users cannot contribute. Bad actors are banned across participating projects [3].
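The gating rule described here can be sketched in a few lines. This is an illustration of the logic only, not Vouch's implementation, file format, or API. The `TrustList` class, the `merge_bans` helper, and all user names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class TrustList:
    """Hypothetical in-memory trust list; not Vouch's real data model."""

    vouched: set[str] = field(default_factory=set)
    denounced: set[str] = field(default_factory=set)

    def may_contribute(self, user: str) -> bool:
        # A ban always wins, even if the user was vouched for earlier.
        if user in self.denounced:
            return False
        # Default deny: unvouched users cannot contribute.
        return user in self.vouched


def merge_bans(*lists: TrustList) -> set[str]:
    """Union of bans, modelling bans shared across participating projects."""
    banned: set[str] = set()
    for trust_list in lists:
        banned |= trust_list.denounced
    return banned


project_a = TrustList(vouched={"alice", "bob"}, denounced={"mallory"})
project_b = TrustList(vouched={"mallory", "carol"})

# Project B imports Project A's bans before making decisions.
project_b.denounced |= merge_bans(project_a)

print(project_b.may_contribute("carol"))    # True: vouched, not banned
print(project_b.may_contribute("mallory"))  # False: banned elsewhere
print(project_b.may_contribute("dave"))     # False: never vouched
```

The design choice worth noting is default deny: an unknown account is treated the same as an untrusted one, which moves the cost of establishing trust from the maintainer back to the contributor.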

tldraw began automatically closing all pull requests from external contributors [4]. Steve Ruiz, the project lead, explained: "We've recently seen a significant increase in contributions generated entirely by AI tools. While some of these pull requests are formally correct, most suffer from incomplete or misleading context, misunderstanding of the codebase, and little to no follow-up engagement from their authors." An open PR represents a commitment from maintainers to review it carefully. Ruiz decided the cost of that commitment was no longer worth bearing for unsolicited contributions.

GitHub itself shipped two new repository settings in February 2026: the ability to disable pull requests entirely, or restrict them to collaborators only [5]. This was a direct response to maintainer complaints. When the platform adds kill switches, the problem is structural.

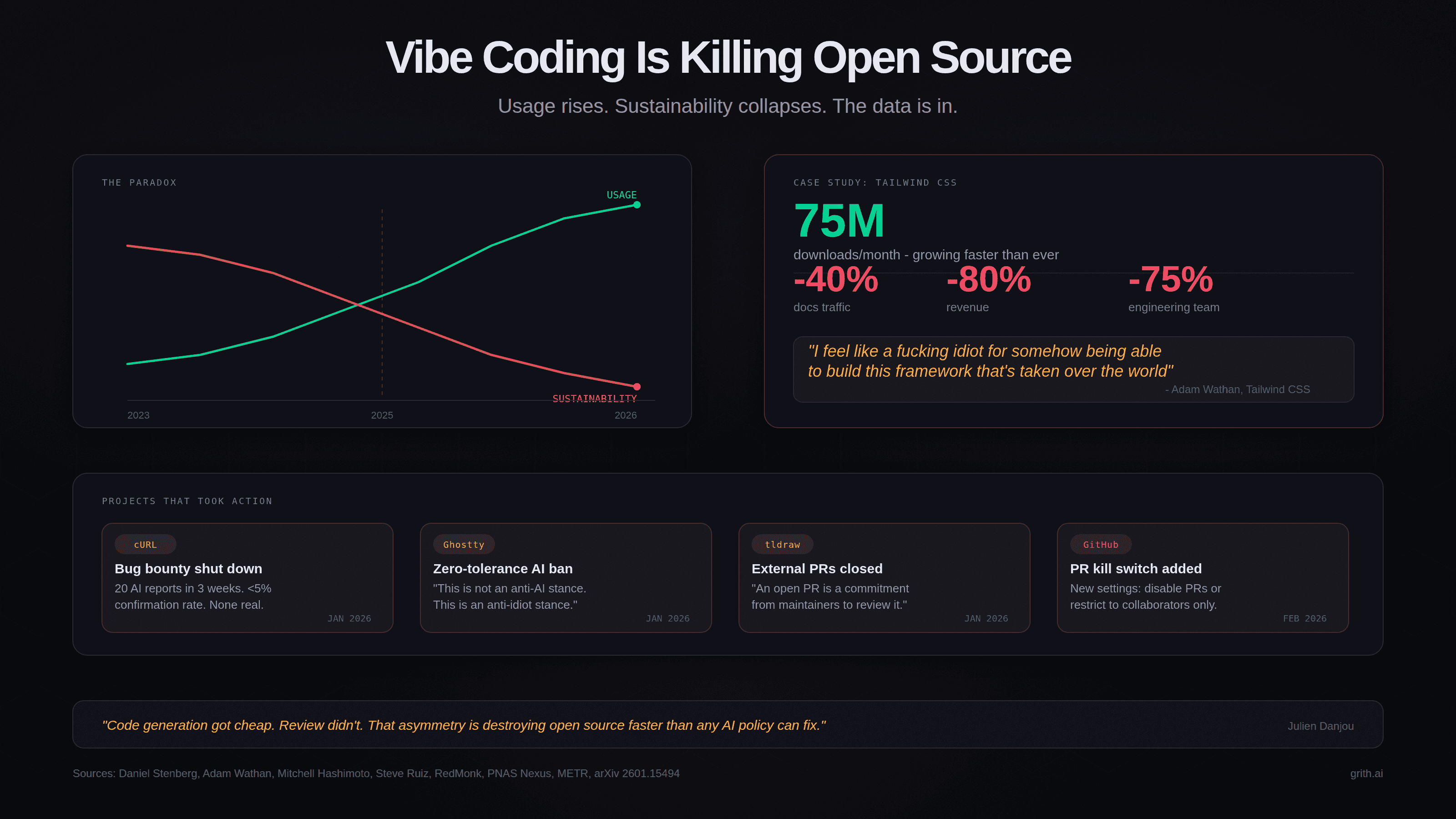

The asymmetry problem

Kate Holterhoff at RedMonk named this pattern "AI Slopageddon" in February 2026 [6]. The economics are simple: code generation got cheap, but code review did not.

A contributor can paste an issue into an AI tool, get a plausible-looking patch, and submit it in under a minute. The maintainer still needs 30-60 minutes to review it properly - understanding context, checking edge cases, verifying it does not break existing behaviour, and writing feedback. When the contributor has no understanding of the codebase and no intention of iterating, that review time is wasted.

Xavier Portilla Edo, infrastructure lead at Voiceflow and Genkit core team member, quantified it: "1 in 10 AI-generated pull requests is legitimate. The other nine waste a maintainer's time" [7].
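Combining the two figures above gives a rough sense of the asymmetry. The sketch below assumes every incoming PR gets a full review before it can be judged, and it treats the quoted "1 in 10" and "30-60 minutes" as point estimates. The function name is illustrative.

```python
def maintainer_minutes_per_legit_pr(review_minutes: int, prs_per_legit: int) -> int:
    """Review time spent, on average, to land one legitimate PR.

    Assumes every incoming PR is fully reviewed before it can be judged.
    """
    if review_minutes < 0 or prs_per_legit < 1:
        raise ValueError("review_minutes must be >= 0 and prs_per_legit >= 1")
    return review_minutes * prs_per_legit


PRS_PER_LEGIT = 10  # "1 in 10 AI-generated pull requests is legitimate"

for review_minutes in (30, 60):  # the 30-60 minute review range quoted above
    total = maintainer_minutes_per_legit_pr(review_minutes, PRS_PER_LEGIT)
    print(f"{review_minutes} min per review -> {total} min ({total / 60:.0f} h) per legitimate PR")
```

Under these assumptions, a maintainer spends 5 to 10 hours of review for each legitimate AI-generated PR, while the ten submissions together cost their authors under ten minutes.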

The filter that open source historically relied on was effort. Writing a patch required understanding the codebase, which required reading the code, which required time and skill. That filter selected for people who cared enough to invest the effort. AI broke that filter. Now anyone can generate a plausible-looking contribution with zero understanding and zero effort [6].

The Tailwind paradox

The most striking illustration of the economic breakdown comes from Tailwind CSS. On January 7, 2026, Adam Wathan - Tailwind's creator - disclosed the following numbers in a GitHub discussion [8]:

- Downloads: 75 million per month, growing faster than ever

- Documentation traffic: Down approximately 40% from peak, despite Tailwind being roughly three times as popular as it was at peak traffic

- Revenue: Down approximately 80%

- Team: 75% of engineers laid off (from four to one)

Wathan was candid: "I feel like a fucking idiot for somehow being able to build this CSS framework that's taken over the world and it's used by everything and it's super popular, but I can't figure out how to have it make enough money that eight people can work on it."

The mechanism is straightforward. AI coding tools generate Tailwind classes directly. Developers no longer visit the documentation to look up utility names, discover components, or browse examples. Documentation traffic was the funnel to Tailwind UI and other paid products. The funnel collapsed. Usage went up. Revenue went down.

This is not a Tailwind-specific problem. It is the economic model of open source breaking.

The feedback loop

A peer-reviewed paper by Koren, Bekes, Hinz, and Lohmann - "Vibe Coding Kills Open Source" - formalises this dynamic with an endogenous entry model [9].

The core finding: vibe coding raises productivity by lowering the cost of using existing open-source code. But it simultaneously weakens the user engagement through which maintainers earn returns - documentation visits, community participation, bug reports, and the visibility that drives sponsorship and commercial products.

The feedback loop works like this:

- AI tools consume open-source code as training data and generate it as output

- Developers use AI tools instead of engaging directly with projects

- Documentation traffic, forum activity, and community participation decline

- Revenue streams tied to engagement (ads, sponsorships, freemium products) collapse

- Maintainers reduce investment or abandon projects

- The pool of maintained, high-quality open source shrinks

- AI tools have less high-quality code to train on and recommend

The paper's conclusion: "Sustaining OSS at its current scale under widespread vibe coding requires major changes in how maintainers are paid" [9].

The evidence across the ecosystem

The data points converge from multiple directions.

Stack Overflow: A PNAS Nexus study measured a 25% reduction in user activity within six months of ChatGPT's launch, relative to comparable platforms where ChatGPT access was restricted [10]. Weekly posts fell from 60,000 to 30,000 between May 2022 and May 2024. The decline affected users of all experience levels.

Code quality: CodeRabbit analysed 470 open-source PRs and found AI-authored changes produced 1.7x more issues per PR than human-only code, with approximately 1.4-1.7x more critical and major findings, including business logic mistakes and flawed control flow [11]. A GitClear analysis of 153 million changed lines found that AI-generated code has a 41% higher churn rate, where churn means code reverted or updated within two weeks [12].

Developer productivity: The METR study - a randomised controlled trial with experienced open-source developers - found that developers using AI tools took 19% longer to complete tasks. They expected AI to speed them up by 24% and believed afterward that it had sped them up by 20% [13]. The perception gap is as concerning as the productivity result.

Broader project response: Gentoo Linux forbids AI-generated contributions outright. NetBSD requires prior written approval. Mesa 3D adopted a policy requiring contributors to defend AI-assisted submissions as though entirely their own. The Python Software Foundation's security developer-in-residence reported a surge in "extremely low-quality, spammy, and LLM-hallucinated security reports" [14]. Craig McLuckie, Kubernetes co-creator, said: "Now we file something as 'good first issue' and in less than 24 hours get absolutely inundated with low quality vibe-coded slop" [7].

What this means for developers

This is not an anti-AI argument. Most of the maintainers taking action use AI tools themselves. Stenberg praised a researcher who used AI-assisted tools effectively to find real cURL issues [1]. Hashimoto's policy explicitly states that Ghostty is "written with plenty of AI assistance" [2]. Ruiz uses AI tools and expects his team to do the same.

The distinction is between AI as a tool used by someone who understands what they are building, and AI as a replacement for understanding. The first produces better software. The second produces volume that is indistinguishable from quality at a glance but fails under scrutiny - and the cost of that scrutiny falls entirely on maintainers who are already stretched thin.

The open-source ecosystem has always depended on a social contract: contributors invest effort, maintainers invest review time, and the exchange produces software that benefits everyone. AI-generated drive-by contributions break that contract. The effort cost dropped to zero. The review cost stayed the same. And the ratio of signal to noise inverted.

If the current trajectory holds, the projects that survive will be the ones that find new economic models or new gatekeeping mechanisms - or both. The ones that cannot will quietly stop being maintained, and the AI tools that depend on their code will have less to work with.

The irony is structural: the tools that make it easy to use open source are making it hard to sustain open source. That is not a problem any individual maintainer can solve. It is an ecosystem problem, and it needs ecosystem-level answers.

Footnotes
